Post by szaleniec on Jul 2, 2010 19:42:08 GMT -5

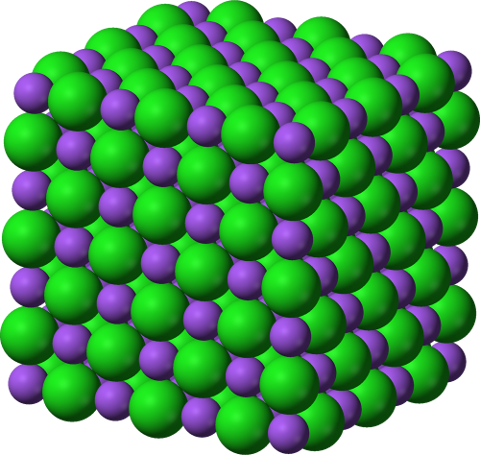

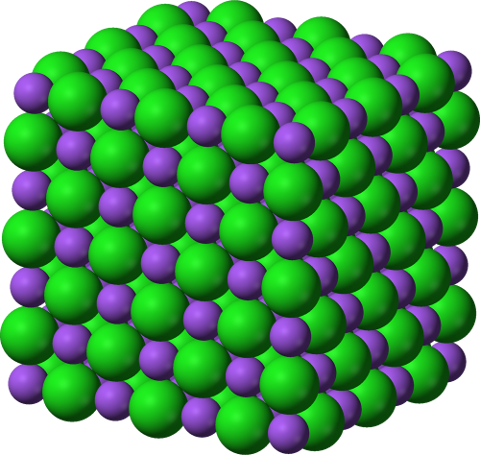

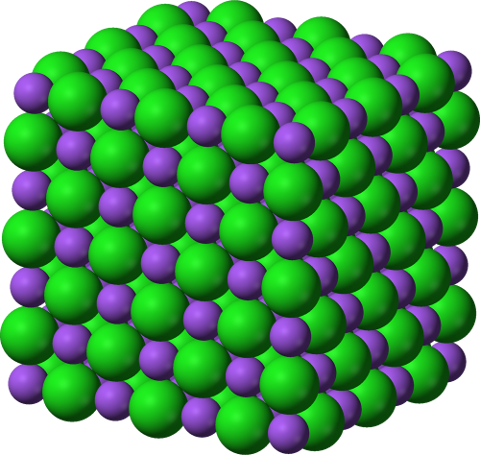

The topic came up in passing on another forum I visit, and I thought it was worth reposting here: creationists like to argue that complexity and entropy are negatively correlated. Because complex objects have lower entropy, the argument goes, they can't form from simpler objects.*

Now consider sodium chloride. By any reasonable definition of complexity, this is one of the least complex structures in existence. It's a cubic lattice of alternating Na⁺ and Cl⁻ ions. So creationist logic would expect a salt crystal to have an extremely large entropy.

Unfortunately for them, it doesn't. Quite the opposite, in fact, as with most solids. Entropy** is proportional to the natural log of the number of accessible microstates***, and solids have far fewer of these than liquids or especially gases because the atoms don't move freely, so our salt crystal has a very low entropy. So, by the creationists' argument, it is a very complex system. Which it quite self-evidently isn't.

(* Without an energy input, another factor they ignore.)

(** Statistical mechanical entropy, that is. As creationists seem to take the old "entropy = disorder" thing as gospel, and it originates as an oversimplification of the statistical mechanical concept of entropy****, it makes sense to use it here.)

(*** In layman's terms, the number of ways in which the components of a system can be arranged such that it has the same macroscopic properties, such as temperature and pressure.)

(**** Whoever thought it was a good idea to teach "entropy = disorder" to students with no statistical mechanical background has a lot to answer for.)
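To put rough numbers on the microstate argument, here is a minimal Python sketch. It is only a toy model, not a real calculation for salt: it assumes identical particles placed on lattice sites with at most one particle per site, counts positions only (ignoring vibrations and momenta), and the function names `boltzmann_entropy` and `lattice_microstates` are made up for this illustration.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def boltzmann_entropy(num_microstates: int) -> float:
    """Return S = k_B * ln(W) for W accessible microstates."""
    return K_B * math.log(num_microstates)


def lattice_microstates(num_sites: int, num_particles: int) -> int:
    """Count ways to put identical particles on distinct sites, at most one per site."""
    return math.comb(num_sites, num_particles)


# Toy "crystal": 50 particles locked onto exactly 50 sites, so one arrangement.
crystal_w = lattice_microstates(50, 50)
# Toy "gas": the same 50 particles free to sit on any of 5000 sites.
gas_w = lattice_microstates(5000, 50)

crystal_s = boltzmann_entropy(crystal_w)
gas_s = boltzmann_entropy(gas_w)

print("crystal microstates:", crystal_w, "entropy:", crystal_s)
print("gas entropy is larger:", gas_s > crystal_s)
```

The simple, rigid arrangement has the fewest microstates and therefore the lowest entropy in this model, which is the opposite of what the "low entropy means complex" argument needs.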

Post by Undecided on Jul 2, 2010 20:19:12 GMT -5

This brings up the interesting issue of how entropy should be taught at an elementary level, especially since disorder has acquired a technical meaning of its own in physics. The idea of entropy as disorder has served its purpose, but perhaps entropy should instead be presented as how much extra information is necessary to completely specify the state of the system.

As for a definition of complexity, an uncontested one seems to be lacking: cscs.umich.edu/~crshalizi/notebooks/complexity-measures.html

Post by szaleniec on Jul 3, 2010 16:10:07 GMT -5

Entropy, unlike other thermodynamic variables like pressure, volume, temperature and internal energy, is tricky to pin down. For an elementary definition, you'd ideally want something that doesn't draw on either statistical mechanics or information theory.

The information-based description suggested above is a very nice and elegant conceptualisation of entropy, and I might borrow it for my own use, but it ultimately suffers the same issues as entropy = disorder. The problem is that a first-year undergrad faced with dQ = T dS for the first time will wonder how on Earth we get the idea that S is the disorder of the system. It all seems completely arbitrary, pulled out of thin air in a (dare I say) disorderly fashion. We need a description of entropy that doesn't assume any knowledge that the student won't have.

The conceptualisation I favour for entry-level students is one derived from the basic principles of thermodynamics: that the entropy measures the unavailability of a system's internal energy to perform work.

sonickid01

Full Member

DO THE RIGHT THING

DO THE RIGHT THING

Posts: 174

Post by sonickid01 on Jul 3, 2010 16:39:51 GMT -5

The reason entropy is given as disorder is because it's remarkably simpler than the details. One way I've heard it put is the amount of different classifications, and the more separated out something is into separate components or classes, the lower the entropy. Example, a salt and pepper shaker are relatively well organized, and have low entropy as two separately definable components. A thorough mixture of the two will have only one class of substance, the salt + pepper mixture.

I do not know how accurate this is, so halp if I'm wrong please.

Post by Admiral Lithp on Jul 3, 2010 18:00:40 GMT -5

You know, you'd think high school would have covered entropy somewhere, but I have no idea what you guys are going on about.

Post by szaleniec on Jul 3, 2010 18:41:36 GMT -5

> The reason entropy is given as disorder is because it's remarkably simpler than the details. One way I've heard it put is the amount of different classifications, and the more separated out something is into separate components or classes, the lower the entropy. Example, a salt and pepper shaker are relatively well organized, and have low entropy as two separately definable components. A thorough mixture of the two will have only one class of substance, the salt + pepper mixture. I do not know how accurate this is, so halp if I'm wrong please.

Kind-of sort-of. In statistical mechanics, the entropy is defined as being proportional to the natural log of the number of accessible microstates, which (approximately) means the number of ways in which the components of a system can be arranged such that it has the same bulk properties.

The entropy = disorder thing is indeed simple to explain. That's not my problem with it. The problem is that it's a non-sequitur in the context of introductory thermodynamics. Nothing about the basic definition of entropy suggests that it's got anything to do with the organisation of the system; you need statistical mechanics to draw that conclusion.

> You know, you'd think high school would have covered entropy somewhere, but I have no idea what you guys are going on about.

It's undergrad stuff. If you do a physics or chemistry degree, it'll probably show up in the first year.

Post by sonickid01 on Jul 3, 2010 19:17:43 GMT -5

> You know, you'd think high school would have covered entropy somewhere, but I have no idea what you guys are going on about.

xD No one in high school knows. Our chemistry teacher spent a day on the subject and later on in the year we were playing Hangman with chemistry words. I decided on "entropy" and they guessed up to "ENT_OPY" and couldn't get it within 5 tries. "Entyopy! Entsulphy! Egg salad! Entcolpy!" Nobody could define it when they guessed it either, even though the teacher had specifically explained the disorder definition when he taught it.

Lolwut. I'm not following here, to be honest. Is the disorder thing the basic definition, or the definition related to microstates the more basic one? Maybe I'm just putting the easier one because it's simpler.

Also when I studied physics, thermodynamics defined entropy as related to heat content and the distribution of heat. The second law of thermodynamics has been put as the tendency to increasing entropy, but I've also read it as the distribution of heat from hot to cold areas, but I thought this had more to do with enthalpy than entropy although I'm probably just not getting it. At what level of analysis is entropy equal to movement of heat, and for that matter can all the versions of the second law of thermodynamics ('cause I believe there's more than just a few) be shown to be equivalent?

Post by szaleniec on Jul 3, 2010 19:54:26 GMT -5

> Lolwut. I'm not following here, to be honest. Is the disorder thing the basic definition, or the definition related to microstates the more basic one? Maybe I'm just putting the easier one because it's simpler.

The basic definition of entropy is that it's the extensive variable in the heat transfer differential, the S term of dQ = T dS. The definition related to microstates comes from statistical mechanics rather than classical thermodynamics, and the disorder thing is a simplification of that concept.

It doesn't make sense to talk about entropy as disorder without some grounding in statistical mechanics. For one thing, going "the S term represents the disorder of the system" without any context is just confusing. For another, you end up with people blindly assuming that entropy is disorder without any idea of when the analogy actually works.

Those two statements of the second law are equivalent. A transfer of heat from a hot area to a cold area is always accompanied by an increase in entropy. Enthalpy does have to do with heat transfer* but not with the second law.

(* The change in enthalpy is equal to the heat transferred to a system at constant pressure, which is why chemical heats of reaction are typically given in terms of enthalpy: it's easier to keep a system at constant pressure than at constant volume.)
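The link between the two statements can be checked with a few lines of Python. This is a rough sketch under simplifying assumptions: both bodies are treated as large reservoirs whose temperatures stay fixed, so dQ = T dS integrates to ΔS = Q/T for each, and the function name `entropy_change_of_transfer` is invented for the example.

```python
def entropy_change_of_transfer(heat_joules: float, t_hot: float, t_cold: float) -> float:
    """Total entropy change when heat leaves a reservoir at t_hot and enters one at t_cold.

    Temperatures are in kelvin and assumed constant during the transfer.
    """
    if t_hot <= 0 or t_cold <= 0:
        raise ValueError("temperatures must be positive, in kelvin")
    ds_hot = -heat_joules / t_hot   # the hot reservoir loses entropy
    ds_cold = heat_joules / t_cold  # the cold reservoir gains more than that
    return ds_hot + ds_cold


# 1000 J flowing from 400 K to 300 K: the total entropy change is positive.
total = entropy_change_of_transfer(1000.0, 400.0, 300.0)
print("hot to cold:", total, "J/K")

# The reverse flow would lower the total entropy, which the second law forbids
# for a spontaneous process.
print("cold to hot:", entropy_change_of_transfer(-1000.0, 400.0, 300.0), "J/K")
```

Because the same Q is divided by a smaller temperature on the cold side, the total is positive whenever heat flows from hot to cold, and zero only when the two temperatures are equal.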

Post by sonickid01 on Jul 4, 2010 10:24:58 GMT -5

Since I am a basic high school kid, I won't ask about the differential equation.

Now in thermodynamics, I remember in basic Chemistry last year being taught that it was a factor in whether reactions could occur spontaneously or not (i.e., it's part of the formula for Gibbs Free Energy, I think G = H - S or S - H, but I'm thinking the first one). However the teacher didn't give a lot of explanation as to what exactly it is other than "disorder," but as you said it doesn't make sense without statistical mechanics.

According to Wikipedia, "Entropy is as such a function of a system's tendency towards spontaneous change," but this definition is cloudy from the beginning. Entropy always increases, but wouldn't something with high entropy (very even distribution with low amount of energy available to do work) have low ability to make spontaneous change, or is my understanding wrong? I asked my physics teacher about entropy during the year and he said that to some degree entropy has to do with waste heat, which is kind of "spread thin" throughout molecules in the heat sink so it's no longer usable.

My next question is at what point are the thermodynamic definition and the statistical mechanics definition of entropy equivalent, or are they? I don't see how disorder directly relates to heat and the ability to do work.

The reason I'm asking these questions is because if the foundation of the concepts makes sense, then all the other results that come out of it will be perfectly explainable and therefore intuitively logical so I'll remember it better. To have a reason why thermodynamics = statistical mechanics or why they aren't will help me remember whether the two are equal or not.

Edit: Old Viking, there you go. I couldn't remember that definition for some reason, I felt ridiculous that I couldn't put it that simply even though I'd learned it. So the energy unable to do work.
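For reference, the usual textbook form of the Gibbs relation includes the temperature: G = H - TS, so at constant temperature and pressure ΔG = ΔH - TΔS, and a process is spontaneous when ΔG is negative. A small Python sketch of that test follows, using melting ice as the example. The fusion values are approximate textbook figures, assumed here to be temperature-independent, and the function name `gibbs_free_energy_change` is made up for the illustration.

```python
def gibbs_free_energy_change(delta_h: float, temperature: float, delta_s: float) -> float:
    """Return delta_G = delta_H - T * delta_S (J/mol, with T in kelvin)."""
    return delta_h - temperature * delta_s


# Approximate textbook values for melting ice (assumed constant with temperature).
DELTA_H_FUSION = 6010.0  # J/mol, heat absorbed on melting
DELTA_S_FUSION = 22.0    # J/(mol K), entropy gained on melting

for temperature in (263.15, 283.15):  # -10 C and +10 C
    delta_g = gibbs_free_energy_change(DELTA_H_FUSION, temperature, DELTA_S_FUSION)
    verdict = "spontaneous" if delta_g < 0 else "not spontaneous"
    print(f"T = {temperature} K: melting is {verdict}")
```

Below the melting point the enthalpy cost wins and ice stays frozen; above it the TΔS term wins and melting goes ahead on its own. That is the sense in which entropy is "a factor in whether reactions could occur spontaneously".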

Post by Old Viking on Jul 4, 2010 15:35:56 GMT -5

Entropy is the amount of energy in a system that is no longer available to do mechanical work. I, for example, am extremely entropic.

Post by tolpuddlemartyr on Jul 4, 2010 18:41:18 GMT -5

Post by Yla on Jul 5, 2010 7:54:53 GMT -5

> Entropy is the amount of energy in a system that is no longer available to do mechanical work. I, for example, am extremely entropic.

No, that's anergy.

The way I relate the statistical and thermodynamic uses of entropy: we need a spatial temperature gradient to do work. So when the energy is distributed equally in our system, we have high entropy as in disorder, and we can't do any work either. When we split our system down the middle, one part glowing hot, the other absolute zero, it's much more ordered, and it's usable.

Disbelieving comment: I can't believe I took Engineering Thermodynamics for 2 semesters just to answer a forum post.
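One way to make the "no gradient, no work" point concrete is the Carnot limit, the standard upper bound on the fraction of heat that any engine running between two temperatures can turn into work. The post does not name it, so treat this as an illustrative stand-in rather than the poster's own method; the function name `carnot_efficiency` is hypothetical.

```python
def carnot_efficiency(t_hot: float, t_cold: float) -> float:
    """Maximum fraction of heat convertible to work between two temperatures (kelvin)."""
    if t_hot <= 0 or t_cold < 0:
        raise ValueError("t_hot must be positive and t_cold non-negative, in kelvin")
    if t_cold > t_hot:
        raise ValueError("t_cold must not exceed t_hot")
    return 1.0 - t_cold / t_hot


# Energy spread evenly: both halves at the same temperature, nothing to extract.
print("uniform system:", carnot_efficiency(300.0, 300.0))

# Half the system twice as hot (in kelvin) as the other half.
print("hot/cold split:", carnot_efficiency(600.0, 300.0))
```

With equal temperatures the bound is zero, and it grows as the cold side gets colder relative to the hot side, approaching 1 in the idealised case where the cold half sits at absolute zero.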

Post by Mira on Jul 5, 2010 19:14:56 GMT -5

> You know, you'd think high school would have covered entropy somewhere, but I have no idea what you guys are going on about.

Really? I've known the basic definition of entropy for a long while. Of course, the majority of my education has been outside of school and I've never taken a chemistry or physics class.
